The AI video generation landscape has undergone a seismic shift in early 2026. What was once a playground of experimental tools producing blurry, artifact-laden clips has matured into a competitive arena where three models now dominate the leaderboards: Happy Horse 1.0, Kling 3.0, and SkyReels V4. Each represents a distinct architectural philosophy, and each excels in specific production scenarios that matter to creators, marketers, and filmmakers. This comprehensive guide dissects their technical foundations, real-world performance, and practical applications to help you choose the right model for your workflow.

The New Benchmark: What Changed in 2026

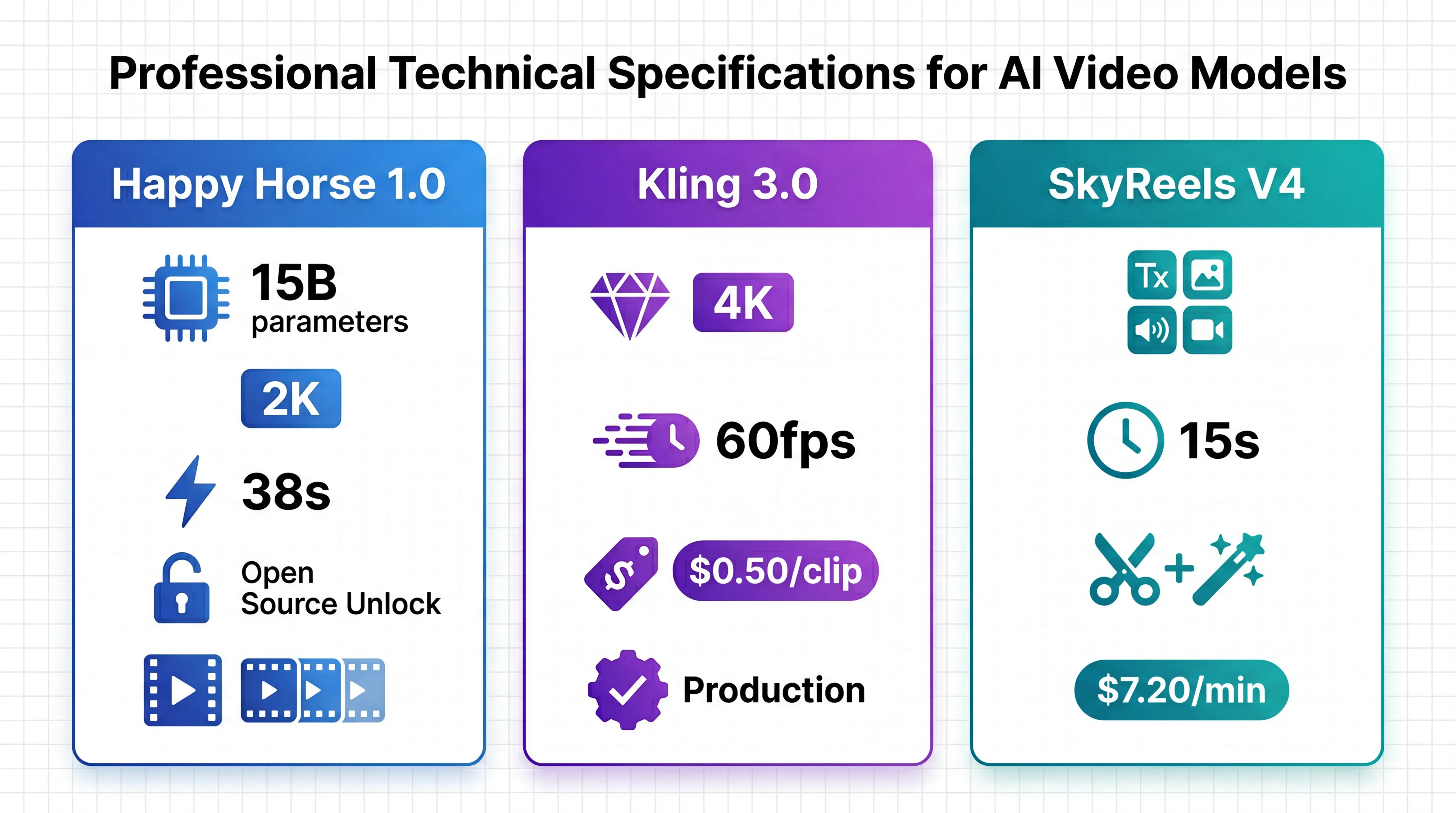

The AI video generation space crossed a critical threshold in early 2026. Native audio-video joint generation, which existed only in research papers twelve months ago, has become a production-ready feature across all three models. Resolution capabilities have also leaped forward. Kling 3.0 now ships native 4K output at 60fps, while Happy Horse 1.0 delivers 1080p video in approximately 38 seconds on H100 hardware, with output up to 2K. Motion quality benchmarks have tightened to the point where independent evaluators score Seedance 2.0, a fourth contender used as a reference point throughout this guide, at 9.2 out of 10 for motion realism, with Kling 3.0 following closely at 9.0.

The competitive dynamics have also fundamentally shifted. Happy Horse 1.0 emerged anonymously on the Artificial Analysis Video Arena in early April 2026 and rapidly climbed to the number one position with an Elo rating of 1,361 in text-to-video without audio, surpassing established players like Seedance 2.0 and Kling 3.0. This blind evaluation methodology, where users vote on outputs without knowing which model generated them, provides one of the most objective quality signals available today. The fact that Happy Horse 1.0 consistently outperformed both Kling 3.0 and SkyReels V4 in side-by-side comparisons suggests a genuine quality advantage rather than marketing hype, although arena votes alone cannot attribute that advantage to any specific architectural choice.

Happy Horse 1.0: The Open-Source Disruptor

Happy Horse 1.0 represents the first serious challenge to closed-source dominance in AI video generation. Built on a 15-billion-parameter unified Transformer architecture with 40-layer self-attention, the model natively processes text, image, video, and audio tokens within a single forward pass. This architectural decision eliminates the synchronization artifacts that plague multi-stage pipelines, where video and audio are generated separately and then aligned in post-processing.

Technical Architecture and Performance

The model employs DMD-2 distillation, a technique that reduces the denoising process to just eight steps without requiring classifier-free guidance. This optimization delivers remarkable inference speeds: approximately two seconds for a five-second clip at 256p resolution, and roughly 38 seconds for full 1080p output on H100 hardware. When compared directly to its closest competitors, Happy Horse 1.0 generates 2K video 30% faster than Seedance 1.5 Pro and 29% faster than Kling 2.1.
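To see why removing classifier-free guidance matters alongside the step reduction, the sketch below counts denoiser evaluations per clip. It assumes the standard formulation of classifier-free guidance, in which every denoising step evaluates the network twice (once conditional, once unconditional). The 50-step guided baseline is a hypothetical comparison point chosen for illustration, not a figure from the Happy Horse documentation.

```python
def network_evaluations(steps: int, uses_cfg: bool) -> int:
    """Count denoiser forward passes needed to sample one clip."""
    # Assumption: classifier-free guidance doubles the work per step.
    passes_per_step = 2 if uses_cfg else 1
    return steps * passes_per_step


# Hypothetical guided baseline: 50 steps with classifier-free guidance.
baseline = network_evaluations(steps=50, uses_cfg=True)
# Distilled setting described in the text: eight steps, no guidance.
distilled = network_evaluations(steps=8, uses_cfg=False)
reduction = baseline / distilled

print(f"{baseline} vs {distilled} evaluations ({reduction:.1f}x fewer)")
```

Wall-clock speedups will not match this ratio exactly, since decoding, super-resolution, and audio synthesis add costs that this count ignores.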

The Dual-Branch DiT architecture deserves particular attention. One branch handles visual synthesis while the other generates temporally aligned audio, with both branches sharing a unified text encoder. This design enables the model to maintain persistent character identity across multiple shots, a capability that distinguishes it from single-shot generators like Sora, Runway, or standard Kling implementations. When you prompt Happy Horse 1.0 with a narrative description, it automatically creates coherent scene sequences rather than isolated clips, dramatically reducing the manual editing required to assemble a complete story.

Multilingual Lip-Sync and Audio Capabilities

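Happy Horse 1.0 supports native joint audio-video generation in seven languages: Chinese, English, Japanese, Korean, German, French, and Cantonese. The model achieves what its documentation describes as ultra-low WER lip-sync. WER stands for Word Error Rate, a metric borrowed from speech recognition: the number of word substitutions, deletions, and insertions needed to turn a transcript into the reference text, divided by the number of words in the reference. Applied to generated video, a low WER indicates that the spoken dialogue is intelligible and matches the script, which makes it a proxy for lip-sync quality rather than a direct measure of how closely lip movements track the audio. While independent verification of these WER claims remains pending, the arena outputs visible on Artificial Analysis demonstrate convincing synchronization in English and Chinese test cases.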
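For readers unfamiliar with the metric, here is a minimal sketch of how Word Error Rate is conventionally computed, using word-level edit distance. It assumes the generated speech has already been transcribed (for example by a speech recognizer) and is compared against the scripted dialogue; the function name and the sample sentences are illustrative, and this is not the evaluation code used by the Happy Horse team.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1        # reference word missing from hypothesis
            insertion = curr[j - 1] + 1   # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


script = "the rain falls on the street"
transcript = "the rain fell on street"
print(f"WER: {word_error_rate(script, transcript):.3f}")
```

In this example one word is substituted and one is dropped, so two errors against six reference words give a WER of about 0.333.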

The audio generation extends beyond dialogue to include ambient sounds and Foley effects. When you generate a scene of rain falling on a city street, the model produces not only the visual of water droplets and wet pavement reflections but also the layered soundscape of rainfall, distant traffic, and the specific acoustic signature of water hitting different surfaces. This level of audio-visual integration eliminates an entire post-production workflow step that traditionally required separate sound design tools.

The Open-Source Advantage and Current Limitations

Happy Horse 1.0 ships as a fully open-source package including the base model, distilled model, super-resolution module, and complete inference code. This licensing approach enables self-hosting and fine-tuning for custom use cases, a capability that matters enormously for enterprises with specific brand guidelines, proprietary visual styles, or data sovereignty requirements. The model outperforms Seedance 2.0, Ovi 1.1, and LTX 2.3 on the Artificial Analysis Video Arena leaderboard, establishing it as the highest-quality open-weights option available as of mid-April 2026.

However, a critical gap exists between benchmark performance and production accessibility. As of April 18, 2026, Happy Horse 1.0 has no publicly available API. The official team announced via social media that API access will launch on April 30, 2026, and warned that multiple fraudulent websites have already appeared claiming to offer access. This means the number one model on the global leaderboard remains inaccessible for production workflows, creating a strategic dilemma for teams evaluating their video generation infrastructure today.

Kling 3.0: The Production-Grade Benchmark

Kling 3.0 from Kuaishou has established itself as the production-grade standard for AI video generation through two months of live API availability, comprehensive documentation, and consistent performance at scale. The model operates on a Visual Chain-of-Thought architecture that breaks down complex prompts into sequential reasoning steps, enabling more accurate interpretation of multi-element scenes with specific camera movements, lighting conditions, and character interactions.

Native 4K Output and Motion Quality

Kling 3.0's most visible differentiator is native 4K output at 60 frames per second, the highest native resolution among all major AI video models as of February 2026. This is not upscaled 1080p content but true 3840x2160 rendering without the softness and artifacts that characterize post-generation resolution enhancement. The 60fps frame rate eliminates the AI stutter found in earlier 24fps and 30fps models, making Kling 3.0 particularly effective for high-speed action sequences, sports content, and professional product demonstrations where motion clarity directly impacts perceived quality.

Independent benchmarks consistently rank Kling 3.0 among the top performers for motion quality. Professional videographers have described it as arguably the most capable general-purpose video model available right now and state-of-the-art overall for natural movement and physics simulation. The model excels at scenarios requiring realistic physical interaction: objects colliding, liquids pouring, and fabric responding to wind, where earlier models produced visually implausible results.

Cost Efficiency and Production Scalability

At approximately $0.50 per clip, Kling 3.0 is positioned as the most cost-effective option for high-volume production among top-tier models. When accessed through API providers like ModelsLab, pricing runs approximately $0.12 to $0.15 per second of generated video, so a five-second clip costs roughly $0.60 to $0.75, slightly above the headline per-clip figure. Bulk pricing is available for high-volume users, making Kling 3.0 particularly attractive for marketing agencies, social media content teams, and e-commerce platforms that need to generate dozens or hundreds of product videos monthly.

User-reported generation times range from two to fifteen minutes depending on prompt complexity and server load. While this is slower than Happy Horse 1.0's claimed 38-second generation time for 1080p, the difference matters less in batch production workflows where multiple clips are queued simultaneously. The API stability and predictable pricing structure have made Kling 3.0 the infrastructure backbone for numerous production applications that require consistent quality at scale.

Motion Control and Advanced Features

Kling 3.0 offers a Motion Control feature that deserves specific attention. Users can upload a reference video, extract its motion pattern, and apply that movement signature to entirely different subjects. For example, you might record a specific camera dolly movement through a physical space, extract that motion profile, and then apply it to an AI-generated fantasy landscape. This capability bridges the gap between traditional cinematography techniques and AI generation, enabling directors to maintain precise creative control over camera work while leveraging AI for visual content creation.

The Kling 3.0 Omni variant extends the base model with enhanced multimodal capabilities, supporting voice cloning where users bind a specific voice profile to a character before generation. This ensures consistent vocal characteristics across multiple scenes, which matters enormously for narrative content, branded characters, and educational series where voice recognition aids viewer comprehension and engagement.

SkyReels V4: The Unified Multimodal Foundation

SkyReels V4 from Kunlun represents a fundamentally different architectural approach. Rather than optimizing for a single generation pass, the model adopts a dual-stream Multimodal Diffusion Transformer architecture where one branch synthesizes video and the other generates temporally aligned audio, while both share a powerful text encoder based on Multimodal Large Language Models. This design enables SkyReels V4 to accept the richest set of multimodal instructions among all three models: text, images, video clips, masks, and audio references can be combined in arbitrary configurations to precisely control scene composition, character appearance, and sonic atmosphere.

Unified Generation, Inpainting, and Editing

SkyReels V4's defining characteristic is its unified treatment of generation, inpainting, and editing within a single architecture. The model employs a channel-concatenation formulation that handles image-to-video conversion, video extension, and surgical video editing under one interface. This means you can generate a base scene, then use mask-based inpainting to swap out specific elements (changing a character's outfit, removing a watermark, or replacing a background) without regenerating the entire clip.
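The idea behind a channel-concatenation formulation can be sketched in a few lines: the denoiser always receives the same stack of channels (noisy latent, masked reference, mask), and the task is determined purely by which positions the mask marks as known. The toy below uses flat Python lists in place of real latent tensors; the function names, shapes, and values are illustrative assumptions, not SkyReels V4's actual interface.

```python
import random

Video = list[list[float]]  # T frames, each a flat list of latent values


def temporal_mask(num_frames: int, known_frames: set[int], width: int) -> Video:
    """1.0 where a frame is supplied as conditioning, 0.0 where it is generated."""
    return [[1.0 if t in known_frames else 0.0] * width for t in range(num_frames)]


def build_model_input(noisy: Video, reference: Video, mask: Video) -> list[list[list[float]]]:
    """Stack [noisy latent, masked reference, mask] as channels for every frame."""
    stacked = []
    for noisy_f, ref_f, mask_f in zip(noisy, reference, mask):
        masked_ref = [r * m for r, m in zip(ref_f, mask_f)]  # hide unknown regions
        stacked.append([noisy_f, masked_ref, mask_f])
    return stacked


T, W = 4, 3
rng = random.Random(0)
noisy = [[rng.gauss(0.0, 1.0) for _ in range(W)] for _ in range(T)]
reference = [[0.5] * W for _ in range(T)]

# The same interface expresses different tasks through the mask alone.
image_to_video = build_model_input(noisy, reference, temporal_mask(T, {0}, W))
extension = build_model_input(noisy, reference, temporal_mask(T, {0, 1}, W))
inpainting = build_model_input(noisy, reference, [[1.0, 0.0, 1.0] for _ in range(T)])
```

Image-to-video keeps only the first frame, extension keeps the opening frames, and inpainting keeps everything except the masked region, which is why a single model can cover all three workflows.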

The practical implications are substantial. Traditional video generation workflows require separate tools for creation and modification: you generate in one system, export, edit in another application, and hope the edits blend seamlessly. SkyReels V4 collapses this pipeline into a single environment where modifications occur within the same latent space as the original generation, ensuring visual consistency and eliminating format conversion artifacts.

Resolution, Duration, and Efficiency Strategy

SkyReels V4 supports up to 1080p resolution, 32 frames per second, and 15-second duration, the longest single-generation duration among the three models compared here. To make this high-resolution, long-duration generation computationally feasible, the model employs a clever efficiency strategy. It jointly generates low-resolution full sequences and high-resolution keyframes, then applies dedicated super-resolution and frame interpolation models to produce the final output.

This keyframe-plus-superresolution approach adds processing steps compared to direct generation, which impacts total generation time. However, it enables the model to maintain temporal consistency across longer durations than would be possible with direct 1080p generation given current hardware constraints. For creators producing narrative content, explainer videos, or tutorial sequences where 15-second continuous shots are valuable, this trade-off favors SkyReels V4.

Leaderboard Performance and Accessibility

SkyReels V4 secured the number two position on the Artificial Analysis Global Text-to-Video with Audio Leaderboard shortly after its March 2026 release, demonstrating competitive quality against established models. In text-to-video without audio, SkyReels V4 sits at Elo 1,244, just one point above Kling 3.0 Pro's 1,243. This near-parity in blind evaluation suggests that quality differences between these models are narrowing to the point where workflow integration, pricing structure, and specific feature requirements become the primary decision factors.
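To put these rating gaps in perspective, the sketch below converts Elo differences into expected head-to-head preference rates. It assumes the conventional logistic Elo formula with a scale of 400; the arena's exact rating procedure may differ, so treat the output as an approximation.

```python
def expected_win_rate(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# Text-to-video (no audio) ratings quoted in this article.
ratings = {
    "Happy Horse 1.0": 1361,
    "SkyReels V4": 1244,
    "Kling 3.0 Pro": 1243,
}

horse_vs_skyreels = expected_win_rate(ratings["Happy Horse 1.0"], ratings["SkyReels V4"])
skyreels_vs_kling = expected_win_rate(ratings["SkyReels V4"], ratings["Kling 3.0 Pro"])

print(f"Happy Horse 1.0 over SkyReels V4: {horse_vs_skyreels:.1%}")
print(f"SkyReels V4 over Kling 3.0 Pro:   {skyreels_vs_kling:.1%}")
```

Under this model a one-point gap is effectively a coin flip, while the 117-point lead held by Happy Horse 1.0 corresponds to being preferred in roughly two out of three blind comparisons.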

SkyReels V4 is accessible through API providers with pricing around $7.20 per minute of generated video, positioning it between PixVerse V6 at $5.40 per minute and Kling 3.0 Pro at $13.44 per minute. This pricing structure offers what multiple independent evaluators describe as the best quality-to-price ratio among accessible models as of April 2026.
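Because this article quotes prices per clip, per second, and per minute, it helps to normalize them before comparing. The sketch below converts the figures quoted here to a common per-second rate and prices a five-second clip. The helper names are illustrative, and the quotes come from different providers and tiers, so the comparison is indicative only.

```python
def per_second_from_minute(usd_per_minute: float) -> float:
    """Convert a per-minute price into a per-second price."""
    return usd_per_minute / 60.0


def clip_cost(usd_per_second: float, seconds: float) -> float:
    """Cost of one clip, rounded to the nearest cent."""
    return round(usd_per_second * seconds, 2)


# Prices as quoted in this article (USD per second of generated video).
quotes = {
    "Kling 3.0 via ModelsLab (low)": 0.12,
    "Kling 3.0 via ModelsLab (high)": 0.15,
    "PixVerse V6": per_second_from_minute(5.40),
    "SkyReels V4": per_second_from_minute(7.20),
    "Kling 3.0 Pro": per_second_from_minute(13.44),
}

five_second_costs = {name: clip_cost(rate, 5) for name, rate in quotes.items()}
for name, cost in five_second_costs.items():
    print(f"{name:32s} ${cost:.2f} per 5 s clip")
```

On these figures, SkyReels V4's per-minute price works out to the same per-second rate as the low end of the ModelsLab range for Kling 3.0, so the ranking depends heavily on which provider and tier you actually use.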

Head-to-Head Comparison: Technical Specifications

| Specification | Happy Horse 1.0 | Kling 3.0 | SkyReels V4 |
|---|---|---|---|
| Architecture | 15B-parameter unified Transformer, 40-layer self-attention, Dual-Branch DiT | Visual Chain-of-Thought, diffusion-based pipeline | Dual-stream Multimodal Diffusion Transformer (MMDiT) |
| Resolution | Up to 2K (1080p native) | Native 4K (3840x2160) | Up to 1080p |
| Frame Rate | Standard (30fps implied) | 60fps | 32fps |
| Max Duration | Multi-shot sequences | 10-15 seconds per clip | 15 seconds |
| Audio Generation | Native joint synthesis, 7 languages | Native with voice cloning | Native joint synthesis |
| Inference Speed | ~38s for 1080p (H100) | 2-15 minutes (varies by load) | Longer (keyframe + SR approach) |
| API Availability | Coming April 30, 2026 | Live since February 2026 | Live since March 2026 |
| Pricing | TBA | ~$0.50/clip, $0.12-0.15/sec | ~$7.20/min |
| Open Source | Yes (full model + code) | No | Partial (weights status unclear) |
| Elo Rating (T2V no audio) | 1,361 | 1,247 | 1,244 |

Use Case Recommendations: Which Model for Your Workflow

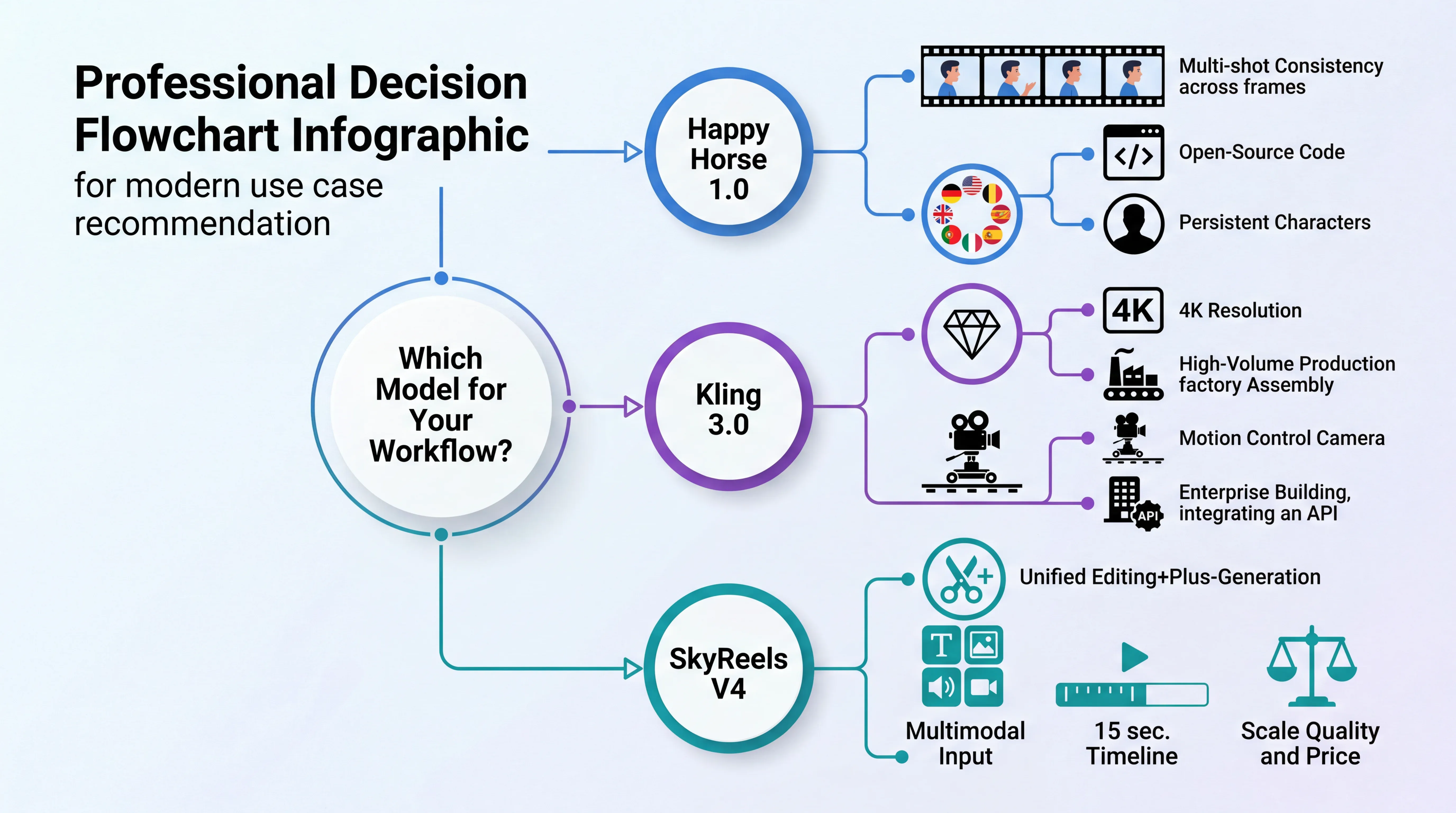

Choose Happy Horse 1.0 If You Need

Multi-shot narrative content with persistent characters. Happy Horse 1.0's ability to maintain character identity across scene transitions makes it uniquely suited for short films, branded storytelling, and educational series where visual continuity matters more than maximum resolution. The native multi-shot generation eliminates the manual editing required to assemble coherent sequences from isolated clips.

Self-hosted infrastructure with full model control. Enterprises with data sovereignty requirements, proprietary visual styles requiring fine-tuning, or workflows that demand on-premise processing will find Happy Horse 1.0's open-source licensing essential. The ability to modify the model architecture and training process enables customization impossible with API-only services.

Multilingual content with synchronized dialogue. The seven-language lip-sync capability makes Happy Horse 1.0 the strongest choice for international content creators, language learning applications, and global marketing campaigns where accurate lip synchronization in multiple languages reduces localization costs.

A timeline that can wait for API access. If your production schedule extends beyond April 30, 2026, and you can wait for official API availability, Happy Horse 1.0's benchmark-leading quality justifies the delay. For immediate production needs, Kling 3.0 or SkyReels V4 remain the practical choices.

Choose Kling 3.0 If You Need

Maximum resolution and motion clarity. When 4K output at 60fps is non-negotiable (for professional product demonstrations, high-end advertising, festival-quality short films, or any content where visual fidelity directly impacts perceived brand value), Kling 3.0's native resolution advantage outweighs all other considerations.

High-volume production at scale. Marketing agencies generating dozens of social media clips daily, e-commerce platforms creating product videos for thousands of SKUs, and content studios producing recurring series will benefit from Kling 3.0's cost efficiency, API stability, and batch processing capabilities. The $0.50 per clip pricing makes large-scale production economically viable.

Precise motion control and cinematography. Directors and cinematographers who want to apply specific camera movements, maintain consistent motion signatures across multiple shots, or integrate AI-generated content with traditionally filmed sequences will find Kling 3.0's Motion Control feature indispensable. This capability bridges professional filmmaking techniques with AI generation in ways the other models do not currently support.

Proven production infrastructure. Teams building customer-facing applications, SaaS products with embedded video generation, or automated content pipelines require the operational reliability that comes from two months of live API availability, comprehensive documentation, and multiple provider options. Kling 3.0 has become production infrastructure in a way Happy Horse 1.0 and SkyReels V4 have not yet achieved.

Choose SkyReels V4 If You Need

Unified generation and editing workflows. Content creators who frequently modify generated videos (swapping backgrounds, changing character outfits, removing unwanted elements, or extending scenes) will benefit enormously from SkyReels V4's integrated inpainting and editing capabilities. Making surgical modifications within the same latent space as the original generation maintains a level of visual consistency that is hard to match with external editing tools.

Complex multimodal conditioning. Projects requiring precise control through combinations of text descriptions, reference images, video clips, masks, and audio guidance will leverage SkyReels V4's rich input modality support. This matters for branded content with strict visual guidelines, character-driven narratives requiring specific appearances and voices, and technical demonstrations where exact scene composition is critical.

Longer continuous shots. The 15-second maximum duration makes SkyReels V4 the strongest choice for explainer videos, tutorial content, narrative scenes requiring extended takes, and any application where cutting between multiple shorter clips would disrupt viewer comprehension or emotional engagement.

Best quality-to-price ratio among accessible options. For teams evaluating cost efficiency across the full production pipeline, including generation, editing, and iteration cycles, SkyReels V4's $7.20 per minute pricing combined with integrated editing capabilities often results in lower total cost than cheaper generation-only services that require separate editing tools and additional iteration rounds.

Performance Benchmarks: Motion Quality and Physics Simulation

Independent testing across real production workflows reveals nuanced performance differences that matter more than aggregate Elo scores for specific use cases. Based on evaluations conducted in February 2026, Seedance 2.0 scores highest for motion realism at 9.2 out of 10, followed closely by Kling 3.0 at 9.0. Seedance excels in cinematic motion smoothing, the subtle acceleration and deceleration curves that make camera movements feel professionally operated rather than mechanically linear. Kling 3.0 leads in natural physics simulation, particularly for scenarios involving gravity, momentum, and material properties like fabric dynamics or liquid behavior.

Happy Horse 1.0's motion quality has been validated through blind arena comparisons where users consistently ranked its outputs above both Kling 3.0 and Seedance 2.0 without knowing which model generated each clip. Arena votes reflect overall preference rather than motion alone, so they are not directly comparable to the 9.0 to 9.2 scores above, but they suggest that Happy Horse 1.0's motion quality is at least in the same tier. Formal numerical ratings remain pending independent laboratory testing.

SkyReels V4's motion quality sits competitively within this top tier, with its Elo rating of 1,244 placing it just one point above Kling 3.0 Pro. The practical difference at this level of performance is that all three models largely avoid the obvious artifacts (morphing objects, drifting faces, and physics violations) that plagued earlier generations. The remaining quality gaps manifest in subtle ways: how naturally a character's weight shifts during a turn, whether water droplets catch light convincingly, and how fabric folds respond to body movement. These nuances matter enormously for high-end commercial work but may be imperceptible in social media content viewed on mobile devices.

The Happy Horse Platform Advantage

While this comparison has focused on the Happy Horse 1.0 model itself, it is worth noting that Happy Horse offers a unified platform approach that addresses a critical pain point in AI video production: tool fragmentation. Rather than managing separate subscriptions to Kling for 4K output, Seedance for motion quality, and SkyReels for editing capabilities, Happy Horse provides integrated access to multiple leading models within a single workflow environment. This consolidation matters for several practical reasons.

First, it eliminates the cognitive overhead of learning different interfaces, prompt syntaxes, and parameter systems for each model. A creator can test the same prompt across Happy Horse 1.0, Kling 3.0, and SkyReels V4 without switching platforms, enabling rapid iteration and direct quality comparison. Second, it simplifies billing and budget management: one subscription, one invoice, and predictable costs, rather than tracking usage across multiple services with different pricing structures. Third, it enables workflow optimization where you match the right model to each creative challenge: use Kling 3.0 for the hero shot requiring maximum resolution, Happy Horse 1.0 for the narrative sequence requiring character consistency, and SkyReels V4 for the scene requiring surgical editing.

This platform approach reflects a broader industry trend toward AI model aggregation. Just as no single large language model dominates every text generation task, no single video generation model will excel at every creative challenge. The future of professional AI video production likely involves intelligent model routing where the system automatically selects, or recommends, the optimal model based on prompt analysis, budget constraints, and quality requirements. Happy Horse's integrated platform positions it well for this multi-model future.
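A very simple form of model routing can be expressed as ordered rules. The sketch below encodes the recommendations from this guide as a rule-based router; the `ShotRequirements` fields, the rule order, and the ten-second threshold are illustrative assumptions, not a description of how the Happy Horse platform actually routes requests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShotRequirements:
    """Hypothetical description of what one shot needs."""
    needs_4k_60fps: bool = False
    needs_persistent_characters: bool = False
    needs_self_hosting: bool = False
    needs_mask_editing: bool = False
    continuous_seconds: float = 5.0


def recommend_model(shot: ShotRequirements) -> str:
    """Return the model this guide would suggest for a shot (first match wins)."""
    if shot.needs_4k_60fps:
        return "Kling 3.0"          # only model here with native 4K at 60fps
    if shot.needs_self_hosting or shot.needs_persistent_characters:
        return "Happy Horse 1.0"    # open weights, multi-shot character identity
    if shot.needs_mask_editing or shot.continuous_seconds > 10:
        return "SkyReels V4"        # integrated editing, 15-second takes
    return "Kling 3.0"              # default for high-volume general work


hero_shot = ShotRequirements(needs_4k_60fps=True)
story_beat = ShotRequirements(needs_persistent_characters=True)
fix_up = ShotRequirements(needs_mask_editing=True)

for shot in (hero_shot, story_beat, fix_up):
    print(recommend_model(shot))
```

A production router would also weigh budget, API availability, and queue times, but even a rule list like this makes the trade-offs explicit and easy to revise as the models change.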

Limitations and Considerations Across All Models

Despite the remarkable progress in early 2026, all three models share certain limitations that creators must understand before committing to production workflows. Physics simulation for complex scenarios, including fire behavior, water dynamics involving multiple interacting streams, and cloth tearing, occasionally breaks down in ways that produce visually implausible results. Multi-character interactions, particularly scenes with three or more characters engaged in coordinated actions, tend to produce visual artifacts where limbs intersect incorrectly or spatial relationships become confused.

Cross-video character consistency remains an unsolved challenge. While Happy Horse 1.0 maintains character identity within a single multi-shot generation, and SkyReels V4 can use reference images to guide character appearance, no model yet reliably produces the same character across entirely separate generation sessions without careful prompt engineering and reference image management. This limitation matters for series content, recurring branded characters, and any application requiring a consistent cast across multiple episodes or campaigns.

The copyright landscape also demands attention. In March 2026, the US Supreme Court declined to hear the appeal in Thaler v. Perlmutter, leaving in place the ruling that purely AI-generated content, created without human authorship, is not eligible for copyright protection in the United States. In practice, a video generated without meaningful human creative input may not be protectable, so others may be free to copy and reuse it. For businesses whose models depend on content exclusivity, this represents a strategic risk requiring mitigation through human creative input, substantial post-production modification, or alternative legal protections like trademark and trade dress.

Conclusion: The Multi-Model Future of AI Video Production

The competition between Happy Horse 1.0, Kling 3.0, and SkyReels V4 reveals a maturing industry where quality differences have narrowed to the point that workflow integration, specific feature requirements, and cost structure often matter more than raw benchmark scores. Happy Horse 1.0 leads in blind quality evaluations and offers unmatched open-source flexibility, but lacks production API access until late April 2026. Kling 3.0 provides the highest native resolution, proven infrastructure reliability, and the most cost-effective pricing for high-volume production. SkyReels V4 delivers the richest multimodal input support, integrated editing capabilities, and the longest single-generation duration.

For most production teams, the optimal strategy is not choosing a single model but rather developing workflows that leverage each model's strengths. Use Kling 3.0 for hero shots and high-resolution deliverables. Deploy Happy Horse 1.0 for narrative sequences requiring character consistency once API access launches. Apply SkyReels V4 for content requiring surgical editing and complex multimodal conditioning. This multi-model approach, facilitated by platforms like Happy Horse that integrate multiple models within a unified interface, represents the pragmatic path forward in an industry where no single solution dominates every use case.

The AI video generation space will continue evolving rapidly through 2026. Quality benchmarks that define state-of-the-art today will likely be mid-tier by Q3 2026. The models compared here will release updated versions, new competitors will emerge, and architectural innovations will shift performance hierarchies. What remains constant is the need for creators to match tool capabilities to specific creative challenges, maintain workflow flexibility, and prioritize production reliability over benchmark rankings. The future of AI video is not a single winning model but an ecosystem of specialized tools, and the winners will be those who learn to orchestrate them effectively.

Ready to experience the future of AI video generation? Visit the Happy Horse platform to access Happy Horse 1.0, Kling 3.0, SkyReels V4, and other leading models in one unified environment. Create your first cinematic video today with our integrated workflow tools and intelligent model routing.